You've reached the internet home of Chris Sells, who has a long history as a contributing member of the Windows developer community. He enjoys long walks on the beach and various computer technologies.

Sunday, Jan 4, 2015, 4:39 AM in The Spout .NET

Handling Orientation Changes in Xamarin.Forms Apps

By default, Xamarin.Forms handles orientation changes for you automatically, e.g.

![ss1[5] ss1[5]](http://www.sellsbrothers.com/posts/Image/259)

Xamarin.Forms handles orientation changes automatically

In this example, the labels are above the text entries in both the portrait and the landscape orientation, which Xamarin.Forms can do without any help from me. However, what if I want to put the labels to the left of the text entries in landscape mode to take better advantage of the space? Further, in the general case, you may want to have different layouts for each orientation. To be able to do that, you need to be able to detect the device’s current orientation and get a notification when it changes. Unfortunately, Xamarin.Forms provides neither, but luckily it’s not hard for you to do it yourself.

Finding the Current Orientation

Determining whether you’re in portrait or landscape mode is pretty easy:

static bool IsPortrait(Page p) { return p.Width < p.Height; }

This function assumes that portrait mode has the smaller width. That won’t hold for every imaginable future device, of course, and in the case of a square device, you’ll just have to take your chances, I guess.

Orientation Change Notifications

Likewise, Xamarin.Forms doesn’t have any kind of an OrientationChanged event, but I find that handling SizeChanged does the trick just as well:

SizeChanged += (sender, e) => Content = IsPortrait(this) ? portraitView : landscapeView;

The SizeChanged event seems to get called exactly once as the user goes from portrait to landscape mode (at least in my debugging, that was true). The different layouts can be whatever you want them to be. I was able to use this technique and get myself a little extra vertical space in my landscape layout:

Using a custom layout to put the labels on the left of the text entries instead of on top

Of course, I could use this technique to do something completely different in each orientation, but I was hoping that the two layouts made sense to the user and didn’t even register as special, which Xamarin.Forms allowed me to do.
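If SizeChanged ever fires more than once per rotation (I only saw it fire once, but I wouldn’t bet on that everywhere), a small helper can remember the last orientation and report only real flips. This is a minimal sketch, not part of Xamarin.Forms: the OrientationTracker and Orientation names are made up for illustration, and the check for non-positive sizes assumes that Width and Height are reported as -1 before the first layout pass.

```csharp
using System;

// Hypothetical helper (not part of Xamarin.Forms): remembers the last
// orientation so repeated size changes only trigger one layout swap.
public enum Orientation { Portrait, Landscape }

public sealed class OrientationTracker
{
    Orientation? last; // null until the first valid size arrives

    public event Action<Orientation> Changed;

    // Call this from a SizeChanged handler with the page's Width and Height.
    // Returns true only when the orientation actually flipped.
    public bool Update(double width, double height)
    {
        // Assumption: sizes are not valid (e.g. -1) before the first layout pass.
        if (width <= 0 || height <= 0) return false;

        // Same rule as IsPortrait above: a square counts as landscape.
        var current = width < height ? Orientation.Portrait : Orientation.Landscape;
        if (last == current) return false;

        last = current;
        var handler = Changed;
        if (handler != null) handler(current);
        return true;
    }
}
```

In a page, the handler would then look something like `SizeChanged += (s, e) => { if (tracker.Update(Width, Height)) Content = IsPortrait(this) ? portraitView : landscapeView; };`.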

Friday, Jan 2, 2015, 7:36 PM in The Spout .NET

Launching the Native Map App from Xamarin.Forms

My goal was to take the name and address of a place and show it on the native map app regardless of which mobile platform my app was running on. While Xamarin.Forms provides a cross-platform API to launch the URL that starts the map app, the URL format is different depending on whether you’re using the Windows Phone 8 URI scheme for Bing maps, the Android Data URI scheme for the map intent or the Apple URL scheme for maps.

This is what I came up with:

public class Place {
    public string Name { get; set; }
    public string Vicinity { get; set; }
    public Geocode Location { get; set; }
    public Uri Icon { get; set; }
}

public void LaunchMapApp(Place place) {
    // Windows Phone doesn't like ampersands in the names and the normal URI escaping doesn't help
    var name = place.Name.Replace("&", "and"); // var name = Uri.EscapeUriString(place.Name);
    var loc = string.Format("{0},{1}", place.Location.Latitude, place.Location.Longitude);
    var addr = Uri.EscapeUriString(place.Vicinity);
    var request = Device.OnPlatform(
        // iOS doesn't like %s or spaces in their URLs, so manually replace spaces with +s
        string.Format("http://maps.apple.com/maps?q={0}&sll={1}", name.Replace(' ', '+'), loc),

        // pass the address to Android if we have it
        string.Format("geo:0,0?q={0}({1})", string.IsNullOrWhiteSpace(addr) ? loc : addr, name),

        // WinPhone
        string.Format("bingmaps:?cp={0}&q={1}", loc, name)
    );

    Device.OpenUri(new Uri(request));
}

This code was tested on several phone and tablet emulators and on 5 actual devices: an iPad running iOS 8, an iPod Touch running iOS 8, a Nokia Lumia 920 running Windows Phone 8.1, an LG G3 running Android 4.4 and an XO tablet running Android 4.1. As you can tell, each platform has not only its own URI format for launching the map app, but quirks as well. However, this code works well across platforms. Enjoy.
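One thing I’d watch for is the coordinate formatting: string.Format uses the current culture, so a device set to a locale with a decimal comma could produce something like "47,6,-122,33". Here’s a sketch of the same three URL formats as a pure function that’s easy to test off-device. The MapPlatform enum and MapUriBuilder class are hypothetical names of my own, and I’ve swapped in Uri.EscapeDataString for the address, which escapes more characters than EscapeUriString does, so treat that as an assumption to check against your own addresses.

```csharp
using System;
using System.Globalization;

// Hypothetical names for illustration; not part of Xamarin.Forms.
public enum MapPlatform { iOS, Android, WinPhone }

public static class MapUriBuilder
{
    // Builds the platform-specific map URI using the same three formats as above.
    public static string Build(MapPlatform platform, string name, double lat, double lon, string address)
    {
        // Invariant culture keeps the decimal separator a '.' on every locale.
        var loc = string.Format(CultureInfo.InvariantCulture, "{0},{1}", lat, lon);

        // Same ampersand workaround as the original code.
        var safeName = (name ?? "").Replace("&", "and");

        switch (platform)
        {
            case MapPlatform.iOS:
                // Spaces become '+' for the Apple maps URL.
                return string.Format("http://maps.apple.com/maps?q={0}&sll={1}",
                    safeName.Replace(' ', '+'), loc);

            case MapPlatform.Android:
                // Prefer the address when we have one; check before escaping so null is safe.
                var target = string.IsNullOrWhiteSpace(address)
                    ? loc
                    : Uri.EscapeDataString(address);
                return string.Format("geo:0,0?q={0}({1})", target, safeName);

            default:
                return string.Format("bingmaps:?cp={0}&q={1}", loc, safeName);
        }
    }
}
```

The result would still go through Device.OpenUri(new Uri(...)) as before; the only point of pulling the string building out is that it can be checked without a device.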

Thursday, Jan 1, 2015, 6:09 PM in The Spout .NET

App and User Settings in Xamarin.Forms Apps

Settings allow you to separate the parameters that configure the behavior of your app from the code, which allows you to change that behavior without rebuilding the app. This is handled at the app level for things like server addresses and API keys and at the user level for things like restoring the last user input and theme preferences. Xamarin.Forms provides direct support for neither, but that doesn’t mean you can’t easily add it yourself.

App Settings

Xamarin.Forms doesn’t have any concept of the .NET standard app.config. However, it’s easy enough to add the equivalent using embedded resources and the XML parser. For example, I built a Xamarin.Forms app for finding spots for coffee, food and drinks between where I am and where my friend is (MiddleMeeter, on GitHub). I’m using the Google APIs to do a bunch of geolocation-related stuff, so I need a Google API key, which I don’t want to publish on GitHub. The easy way to make that happen is to drop the API key into a separate file that’s loaded at run-time but to not check that file into GitHub by adding it to .gitignore. To make it easy to read, I added this file as an Embedded Resource in XML format:

Adding an XML file as an embedded resource makes it easy to read at run-time for app settings

I could’ve gone all the way and re-implemented the entire .NET configuration API, but that seemed like overkill, so I kept the file format simple:

<?xml version="1.0" encoding="utf-8" ?>
<config>
  <google-api-key>YourGoogleApiKeyHere</google-api-key>
</config>

Loading the file at run-time uses the normal .NET resources API:

string GetGoogleApiKey() {
    var type = this.GetType();
    var resource = type.Namespace + "." +
        Device.OnPlatform("iOS", "Droid", "WinPhone") + ".config.xml";
    using (var stream = type.Assembly.GetManifestResourceStream(resource))
    using (var reader = new StreamReader(stream)) {
        var doc = XDocument.Parse(reader.ReadToEnd());
        return doc.Element("config").Element("google-api-key").Value;
    }
}

I used XML as the file format not because I’m in love with XML (although it does the job well enough for things like this), but because LINQ to XML is baked right into Xamarin. I could’ve used JSON, too, of course, but that requires an extra NuGet package. Also, I could’ve abstracted things a bit to make an easy API for more than one config entry, but I’ll leave that for enterprising readers.
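For the enterprising, here’s one way the multi-entry version might look: read every child element of config into a dictionary keyed by element name. This is only a sketch under my own assumptions. The AppConfig class name is hypothetical, it takes the XML as a string (so you’d still load the embedded resource as shown above), and it assumes each setting appears once, since ToDictionary throws on duplicate keys.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Xml.Linq;

// Hypothetical helper for illustration; not part of Xamarin.Forms.
public static class AppConfig
{
    // Parses <config><some-key>value</some-key>...</config> into a dictionary
    // keyed by element name.
    public static IDictionary<string, string> Parse(string xml)
    {
        var root = XDocument.Parse(xml).Element("config");
        if (root == null) throw new FormatException("Missing <config> root element.");

        // Assumes each setting name appears once; duplicates make ToDictionary throw.
        return root.Elements().ToDictionary(e => e.Name.LocalName, e => e.Value);
    }
}
```

With that in place, the lookup becomes something like `AppConfig.Parse(reader.ReadToEnd())["google-api-key"]`.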

User Settings

While app settings are read-only, user settings are read-write and each of the supported Xamarin platforms has its own place to store settings, e.g. .NET developers will likely have heard of Isolated Storage. Unfortunately, Xamarin provides no built-in support for abstracting away the platform specifics of user settings. Luckily, James Montemagno has. In his Settings Plugin NuGet package, he makes it super easy to read and write user settings. For example, in my app, I pull in the previously stored user settings when I’m creating the data model for the view on my app’s first page:

class SearchModel : INotifyPropertyChanged {
    string yourLocation;

    // reading values saved during the last session (or setting defaults)
    string theirLocation = CrossSettings.Current.GetValueOrDefault("theirLocation", "");
    SearchMode mode = CrossSettings.Current.GetValueOrDefault("mode", SearchMode.food);
    ...
}

The beauty of James’s API is that it’s concise (only one function to call to get a value or set a default if the value is missing) and type-safe, e.g. notice the use of a string and an enum here. He handles the specifics of reading from the correct underlying storage mechanism based on the platform, translating it into my native type system and I just get to write my code w/o worrying about it. Writing is just as easy:

async void button1_Clicked(object sender, EventArgs e) {
    ...
    // writing settings values at an appropriate time
    CrossSettings.Current.AddOrUpdateValue("theirLocation", model.TheirLocation);
    CrossSettings.Current.AddOrUpdateValue("mode", model.Mode);
    ...
}

My one quibble is that I wish the functions were called Read/Write or Get/Set instead of GetValueOrDefault/AddOrUpdateValue, but James’s function names make it very clear what’s actually happening under the covers. Certainly the functionality makes it more than worth the extra characters.

User Settings UI

Of course, when it comes to building a UI for editing user settings at run-time, Xamarin.Forms has all kinds of wonderful facilities, including a TableView intent specifically for settings (TableIntent.Settings). However, when it comes to extending the platform-specific Settings app, you’re on your own. That’s not such a big deal, however, since only iOS actually supports extending the Settings app (using iOS Settings Bundles). Android doesn’t support it at all (they only let the user configure things like whether an app has permission to send notifications) and while Windows Phone 8 has an extensible Settings Hub for their apps, it’s a hack if you do it with your own apps (and unlikely to make it past the Windows Store police).

Where Are We?

So, while Xamarin.Forms doesn’t provide any built-in support for app or user settings, the underlying platform provides enough to make implementing the former trivial and the Xamarin ecosystem provides nicely for the latter (thanks, James!).

Even more interesting is what Xamarin has enabled with this ecosystem. They’ve mixed their very impressive core .NET and C# compiler implementation (Mono) with a set of mobile libraries providing direct access to the native platforms (MonoTouch and MonoDroid), added a core implementation of UI abstraction (Xamarin.Forms) and integration into the .NET developer’s IDE of choice (Visual Studio) together with an extensible, discoverable set of libraries (NuGet) that make it easy for 3rd party developers to contribute. That’s a platform, my friends, and it’s separate from the one that Microsoft is building. What makes it impressive is that it takes the army of .NET developers and points them at the current hotness, i.e. building iOS and Android apps, in a way that Microsoft never could. Moreover, because they’ve managed to also support Windows Phone pretty seamlessly, they’ve managed to get Microsoft to back them.

We’ll see how successful Xamarin is over time, but they certainly have a very good story to tell .NET developers.

Saturday, Nov 26, 2011, 11:16 AM in Tools .NET

REPL for the Roslyn CTP 10/2011

I don’t know what it is, but I’ve long been fascinated with using the C# syntax as a command line execution environment. It could be that PowerShell doesn’t do it for me (I’ve seriously tried half a dozen times or more). It could be that while LINQPad comes really close, I still don’t have enough control over the parsing to really make it work for my day-to-day command line activities. Or it may be that my friend Tim Ewald has always challenged csells to sell C shells by the sea shore.

Roslyn REPL

Whatever it is, I decided to spend my holiday time futzing with the Roslyn 2011 CTP, which is a set of technologies from Microsoft that gives you an API over your C# and VB.NET code.

Why do I care? Well, there are all kinds of cool code analysis and refactoring tools I could build with it and I know some folks are doing just that. In fact, at the BUILD conference, Anders showed off a “Paste as VB” command built with Roslyn that would translate C# to VB slick as you please.

For me, however, the first thing I wanted was a C# REPL environment (Read-Evaluate-Print-Loop). Of course, Roslyn ships out of the box with a REPL tool that you can get to with the View | Other Windows | C# Interactive Window inside Visual Studio 2010. In that window, you can evaluate code like the following:

> 1+1

2

> void SayHi() { Console.WriteLine("hi"); }

> SayHi();

hi

Just like modern dynamic languages, as you type your C# and press Enter, it’s executed immediately, even allowing you to drop things like semi-colons or even calls to WriteLine to get output (notice the first “1+1” expression). This is a wonderful environment in which to experiment with C# interactively, but just like LINQPad, it was a closed environment; the source was not provided!

The Roslyn team does provide a great number of wonderful samples (check the “Microsoft Codename Roslyn CTP - October 2011” folder in your Documents folder after installation). One in particular, called BadPainting, provides a text box for inputting C# that’s executed to add elements to a painting.

But that wasn’t enough for me; I wanted at least a Console-based command line REPL like the cool Python, JavaScript and Ruby kids have. And so, with the help of the Roslyn team (it pays to have friends in low places), I built one:

Building it (after installing Visual Studio 2010, Visual Studio 2010 SP1, the Visual Studio 2010 SDK and the Roslyn CTP) and running it lets you do the same things that the VS REPL gives you:

In implementing my little RoslynRepl tool, I tried to stay as faithful to the VS REPL as possible, including the help implementation:

If you’re familiar with the VS REPL commands, you’ll notice that I’ve trimmed the Console version a little as appropriate, most notably the #prompt command, which only has “inline” mode (there is no “margin” in a Console window). Other than that, I’ve built the Console version of REPL for Roslyn such that it works just exactly like the one documented in the Roslyn Walkthrough: Executing Code in the Interactive Window.

Building a REPL for any language is, as you might imagine, a 4-step process:

- Read input from the user

- Evaluate the input

- Print the results

- Loop around to do it again until told otherwise
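Stripped of anything Roslyn-specific, those four steps can be sketched as a loop over a reader, a writer and an evaluator delegate. This is my own minimal illustration, not the RoslynRepl source: MiniRepl is a hypothetical name, the evaluator is whatever function you pass in, and LooksComplete is a crude brace-counting stand-in for a real parser’s completeness check.

```csharp
using System;
using System.IO;
using System.Text;

// Hypothetical skeleton for illustration; not the RoslynRepl implementation.
public static class MiniRepl
{
    // Crude stand-in for a parser's "is this submission complete?" check:
    // input counts as complete once braces and parentheses balance.
    // It ignores strings, comments and directives, so it's illustration only.
    public static bool LooksComplete(string text)
    {
        int depth = 0;
        foreach (char c in text)
        {
            if (c == '{' || c == '(') depth++;
            else if (c == '}' || c == ')') depth--;
        }
        return depth <= 0;
    }

    public static void Run(TextReader input, TextWriter output, Func<string, string> evaluate)
    {
        while (true)                                   // Loop
        {
            output.Write("> ");
            var buffer = new StringBuilder();
            while (true)
            {
                string line = input.ReadLine();        // Read
                if (line == null || line.Trim() == "#exit") return;
                if (string.IsNullOrWhiteSpace(line)) continue;

                buffer.AppendLine(line);
                if (LooksComplete(buffer.ToString())) break;
                output.Write(". ");                    // continuation prompt
            }

            try
            {
                // Evaluate and Print
                output.WriteLine(evaluate(buffer.ToString()));
            }
            catch (Exception e)
            {
                output.WriteLine("error: " + e.Message);
            }
        }
    }
}
```

Hooking it to the console would be `MiniRepl.Run(Console.In, Console.Out, myEvaluator)`, and taking a TextReader and TextWriter instead of using Console directly means the loop can be driven from a StringReader in a test.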

Read

Step 1 is a simple Console.ReadLine. Further, the wonder and beauty of a Windows Console application is that you get complete Up/Down Arrow history, line editing and even obscure commands like F7, which brings up a list of commands in the history:

The reading part of our REPL is easy and has nothing to do with Roslyn. It’s evaluation where things get interesting.

Eval

Before we can start evaluating commands, we have to initialize the scripting engine and set up a session so that as we build up context over time, e.g. defining variables and functions, that context is available to future lines of script:

using Roslyn.Compilers;
using Roslyn.Compilers.CSharp;
using Roslyn.Compilers.Common;
using Roslyn.Scripting;
using Roslyn.Scripting.CSharp;

...

// Initialize the engine
string[] defaultReferences = new string[] { "System", ... };
string[] defaultNamespaces = new string[] { "System", ... };
CommonScriptEngine engine = new ScriptEngine(defaultReferences, defaultNamespaces);

// Create the session first so the hack below has a session to execute against
Session session = Session.Create();

// HACK: work around a known issue where namespaces aren't visible inside functions
foreach (string nm in defaultNamespaces) {
    engine.Execute("using " + nm + ";", session);
}

Here we’re creating a ScriptEngine object from the Roslyn.Scripting.CSharp namespace, although I’m assigning it to the base CommonScriptEngine class which can hold a script engine of any language. As part of construction, I pass in the same set of assembly references and namespaces that a default Console application has out of the box and that the VS REPL uses as well. There’s also a small hack to fix a known issue where namespaces aren’t visible during function definitions, but I expect that will be unnecessary in future drops of Roslyn.

Once I’ve got the engine to do the parsing and executing, I create a Session object to keep context. Now we’re all set to read a line of input and evaluate it:

ParseOptions interactiveOptions =
    new ParseOptions(kind: SourceCodeKind.Interactive,
                     languageVersion: LanguageVersion.CSharp6);

...

while (true) {
    Console.Write("> ");
    var input = new StringBuilder();

    while (true) {
        string line = Console.ReadLine();
        if (string.IsNullOrWhiteSpace(line)) { continue; }

        // Handle #commands
        ...

        // Handle C# (include #define and other directives)
        input.AppendLine(line);

        // Check for complete submission
        if (Syntax.IsCompleteSubmission(
            SyntaxTree.ParseCompilationUnit(
                input.ToString(), options: interactiveOptions))) {
            break;
        }

        Console.Write(". ");
    }

    Execute(input.ToString());
}

The only thing we’re doing that’s at all fancy here is collecting input over multiple lines. This allows you to enter commands over multiple lines:

The IsCompleteSubmission function is the thing that checks whether the script engine will have enough to figure out what the user meant or whether you need to collect more. We do this with a ParseOptions object optimized for “interactive” mode, as opposed to “script” mode (reading scripts from files) or “regular” mode (reading fully formed source code from files). The “interactive” mode lets us do things like “1+1” or “x” where “x” is some known identifier without requiring a call to Console.WriteLine or even a trailing semi-colon, which seems like the right thing to do in a REPL program.

Once we have a complete command, single or multi-line, we can execute it:

public void Execute(string s) {
    try {
        Submission<object> submission = engine.CompileSubmission<object>(s, session);
        object result = submission.Execute();
        bool hasValue;
        ITypeSymbol resultType = submission.Compilation.GetSubmissionResultType(out hasValue);

        // Print the results
        ...
    }
    catch (CompilationErrorException e) {
        Error(e.Diagnostics.Select(d => d.ToString()).ToArray());
    }
    catch (Exception e) {
        Error(e.ToString());
    }
}

Execution is a matter of creating a “submission,” which is a unit of work done by the engine against the session. There are helper methods that make this easier, but we care about the output details so that we can implement our REPL session.

Printing the output depends on the type of a result we get back:

ObjectFormatter formatter =
    new ObjectFormatter(maxLineLength: Console.BufferWidth, memberIndentation: " ");

...

Submission<object> submission = engine.CompileSubmission<object>(s, session);
object result = submission.Execute();
bool hasValue;
ITypeSymbol resultType =
    submission.Compilation.GetSubmissionResultType(out hasValue);

// Print the results
if (hasValue) {
    if (resultType != null && resultType.SpecialType == SpecialType.System_Void) {
        Console.WriteLine(formatter.VoidDisplayString);
    }
    else {
        Console.WriteLine(formatter.FormatObject(result));
    }
}

As part of the result output, we’re leaning on an instance of an “object formatter” which can trim things for us to the appropriate length and, if necessary, indent multi-line object output.

In the case that there’s an error, we grab the exception information and turn it red:

void Error(params string[] errors) {
    var oldColor = Console.ForegroundColor;
    Console.ForegroundColor = ConsoleColor.Red;
    WriteLine(errors);
    Console.ForegroundColor = oldColor;
}

public void Write(params object[] objects) {
    foreach (var o in objects) { Console.Write(o.ToString()); }
}

void WriteLine(params object[] objects) {
    Write(objects);
    Write("\r\n");
}

Loop

And then we do it all over again until the program is stopped with the #exit command (Ctrl+Z, Enter works, too).

Where Are We?

Executing lines of C# code, the hardest part of building a C# REPL, has become incredibly easy with Roslyn. The engine does the parsing, the session keeps the context and the submission gives you extra information about the results. To learn more about scripting in Roslyn, I recommend the following resources:

- Roslyn on MSDN

- The REPL forum for Roslyn

- C# as a Scripting Language in Your .NET Applications Using Roslyn, Anoop Madhusudanan, codeproject.com, 10/24/2011

Now I’m off to add Intellisense support. Wish me luck!

Sunday, May 23, 2010, 8:40 PM in .NET

Spurious MachineToApplication Error With VS2010 Deployment

Often when I'm building my MVC 2 application using Visual Studio 2010, I get the following error:

It is an error to use a section registered as allowDefinition='MachineToApplication' beyond application level. This error can be caused by a virtual directory not being configured as an application in IIS.

On the internet, this error seems to be related to having a nested web.config in your application. I do have such a thing, but it's just the one that came out of the MVC 2 project item template and I haven't touched it.

In my case, this error doesn't seem to have anything to do with a nested web.config. It only started to happen when I began using the web site deployment features in VS2010, which by themselves rock (see Scott Hanselman's "Web Deployment Made Awesome: If You're Using XCopy, You're Doing It Wrong" for details).

If it happens to you and it doesn't seem to make any sense, you can try to fix it with a Build Clean command. If you're used to previous versions of Visual Studio, you'll be surprised, like I was, not to find a Clean option in the sparse Build menu. Instead, you can only get to it by right-clicking on your project in the Solution Explorer and choosing Clean.

Doing that, however, seems to make the error go away. I don't think that's a problem with my app; I think that's a problem with VS2010.

Monday, Apr 5, 2010, 10:33 PM in .NET

The performance implications of IEnumerable vs. IQueryable

It all started innocently enough. I was implementing an "Older Posts/Newer Posts" feature for my new web site and was writing code like this:

IEnumerable<Post> FilterByCategory(IEnumerable<Post> posts, string category) {

if( !string.IsNullOrEmpty(category) ) {

return posts.Where(p => p.Category.Contains(category));

}

return posts;

}

...

var posts = FilterByCategory(db.Posts, category);

int count = posts.Count();

...

The "db" was an EF object context object, but it could just as easily have been a LINQ to SQL context. Once I ran this code, it failed at run-time with a null reference exception on Category. "That's strange," I thought. "Some of my categories are null, but I expect the 'like' operation in SQL to which Contains maps to skip the null values." That should've been my first clue.

Clue #2 was when I added the null check into my Where expression and found that there were far fewer results than I expected. Some experimentation revealed that the case of the category string mattered. "Hm. That's really strange," I thought. "By default, the 'like' operation doesn't care about case." Second clue unnoticed.

My 3rd and final clue was that even though my site was only showing a fraction of the values I knew were in the database, it had slowed to a crawl. By now, those of you experienced with LINQ to Entities/SQL are hollering from the audience: "Don't go into the woods alone! IEnumerable kills all the benefits of IQueryable!"

See, what I'd done was unwittingly switched from LINQ to Entities, which takes my C# expressions and translates them into SQL, and was now running LINQ to Objects, which executes my expressions directly.

"But that can't be," I thought, getting hot under the collar (I was wearing a dress shirt that day -- the girlfriend likes me to look dapper!). "To move from LINQ to Entities/SQL to LINQ to Objects, I thought I had to be explicit and use a method like ToList() or ToArray()." Au contraire mon fraire (the girlfriend also really likes France).

Here's what I expected to be happening. If I have an expression like "db.Posts" and I execute that expression by doing a foreach, I expect the SQL produced by LINQ to Entities/SQL to look like this:

select * from Posts

If I add a Where clause, I expect the SQL to be modified:

select * from Posts where Category like '%whatever%'

Further, if I do a Count on the whole thing, e.g.

db.Posts.Where(p => p.Category.Contains(category)).Count()

I expect that to turn into the following SQL:

select count(*) from Posts where Category like '%whatever%'

And that's all true if I keep things to just "var" but I wasn't -- I was being clever and building functions to build up my queries. And because I couldn't use "var" as a function parameter, I had to pick a type. I picked the wrong one: IEnumerable.

The problem with IEnumerable is that it doesn't have enough information to support the building up of queries. Let's take a look at the extension method of Count over an IEnumerable:

public static int Count<TSource>(this IEnumerable<TSource> source) {

...

int num = 0;

using (IEnumerator<TSource> enumerator = source.GetEnumerator()) {

while (enumerator.MoveNext()) { num++; }

}

return num;

}

See? It's not composing the source IEnumerable over which it's operating -- it's executing the enumerator and counting the results. Further, since our example IEnumerable was a Where statement, which was in turn accessing the list of Posts from the database, the effect was filtering in the Where over objects constituted from the following SQL:

select * from Posts

How did I see that? Well, I tried hooking up the supremely useful SQL Profiler to my ISP's database that was holding the data, but I didn't have permission. Luckily, the SQL tab in LinqPad will show me what SQL is being executed and it showed me just that (or rather, the slightly more verbose and more correct SQL that LINQ to Entities generates in these circumstances).

Now, I had a problem. I didn't want to pass around IEnumerable, because clearly that's slowing things down. A lot. On the other hand, I don't want to use ObjectSet<Post> because it doesn't compose, i.e. Where doesn't return that. What is the right interface to use to compose separate expressions into a single SQL statement? As you've probably guessed by now from the title of this post, the answer is: IQueryable.

Unlike IEnumerable, IQueryable exposes the underlying expression so that it can be composed by the caller. In fact, if you look at the IQueryable implementation of the Count extension method, you'll see something very different:

public static int Count<TSource>(this IQueryable<TSource> source) {

...

return source.Provider.Execute<int>(

Expression.Call(null,

((MethodInfo) MethodBase.GetCurrentMethod()).

MakeGenericMethod(

new Type[] { typeof(TSource) }),

new Expression[] { source.Expression }));

}

This code isn't exactly intuitive, but what's happening is that we're forming an expression which is composed of whatever expression is exposed by the IQueryable we're operating over and the Count method, which we're then implementing. To get this code path to execute for our example, we simply have to replace the use of IEnumerable with IQueryable:

IQueryable<Post> FilterByCategory(IQueryable<Post> posts, string category) {

if( !string.IsNullOrEmpty(category) ) {

return posts.Where(p => p.Category.Contains(category));

}

return posts;

}

...

var posts = FilterByCategory(db.Posts, category);

int count = posts.Count();

...

Notice that none of the actual code changes. However, this new code runs much faster and with the case- and null-insensitivity built into the 'like' operator in SQL instead of the semantics of the Contains method in LINQ to Objects.

The way it works is that we stack one IQueryable implementation onto another, in our case Count works on the Where which works on the ObjectSet returned from the Posts property on the object context (ObjectSet itself is an IQueryable). Because each outer IQueryable is reaching into the expression exposed by the inner IQueryable, it's only the outermost one -- Count in our example -- that causes the execution (foreach would also do it, as would ToList() or ToArray()).

Using IEnumerable, I was pulling back the ~3000 posts from my blog, then filtering them on the client-side and then doing a count of that. With IQueryable, I execute the complete query on the server-side:

select count(*) from Posts where Category like '%whatever%'

And, as our felon friend Ms. Stewart would say: "that's a good thing."
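If you want to see the overload binding for yourself without a database, something like the following sketch should do it. It uses AsQueryable() over an in-memory list as a stand-in for the EF ObjectSet, so the Contains semantics stay those of LINQ to Objects either way; the only thing it demonstrates is which Where the compiler picks based on the static type. The Post, QueryDemo and ProviderSeesWhere names are made up for the example.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Linq.Expressions;

// Hypothetical types for illustration; the in-memory list stands in for db.Posts.
public class Post { public string Category { get; set; } }

public static class QueryDemo
{
    // Static type IEnumerable: binds to Enumerable.Where, so the filter is a
    // compiled delegate that the query provider never gets to see.
    public static IEnumerable<Post> FilterEnumerable(IEnumerable<Post> posts, string category)
    {
        return posts.Where(p => p.Category.Contains(category));
    }

    // Static type IQueryable: binds to Queryable.Where, so the filter is
    // appended to the expression tree that the provider can translate.
    public static IQueryable<Post> FilterQueryable(IQueryable<Post> posts, string category)
    {
        return posts.Where(p => p.Category.Contains(category));
    }

    // True when the outermost node of the query's expression tree is a call to Where.
    public static bool ProviderSeesWhere(IQueryable<Post> query)
    {
        var call = query.Expression as MethodCallExpression;
        return call != null && call.Method.Name == "Where";
    }
}
```

Given `var source = new List<Post> { ... }.AsQueryable();`, the result of FilterQueryable(source, ...) is still an IQueryable whose expression ends in a Where call, while the result of FilterEnumerable(source, ...) is a plain IEnumerable, even though the method bodies are character-for-character the same.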

Sunday, Feb 7, 2010, 12:02 PM in .NET

Data Binding, Currency and the WPF TreeView

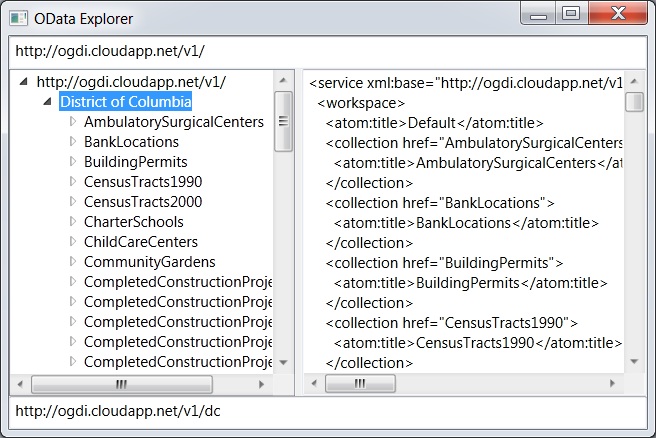

I was building a little WPF app to explore a hierarchical space (OData, if you must know), so of course, I was using the TreeView. And since I'm a big fan of data binding, of course I've got a hierarchical data source (basically):

abstract class Node {

public abstract string Name { get; }

public abstract IEnumerable<Node> Children { get; } // child nodes (property name assumed)

public XDocument Document { get { ... } }

public Uri Uri { get { ... } }

}

I then bind to the data source (of course, you can do this in XAML, too):

// set the data context of the grid containing the treeview and text boxes

grid.DataContext = new Node[] { Node.GetNode(new Uri(uri)) };

// bind the treeview to the entire collection of nodes

leftTreeView.SetBinding(TreeView.ItemsSourceProperty, ".");

// bind each text box to a property on the current node

queryTextBox.SetBinding(TextBox.TextProperty,

new Binding("Uri") { Mode = BindingMode.OneWay });

documentTextBox.SetBinding(TextBox.TextProperty,

new Binding("Document") { Mode = BindingMode.OneWay });

What we're trying to do here is leverage the idea of "currency" in WPF where if you share the same data context, then item controls like textboxes will bind to the "current" item as it's changed by the list control. If this was a listview instead of a treeview, that would work great (so long as you set the IsSynchronizedWithCurrentItem property to true).

The problem, as my co-author and the-keeper-of-all-WPF-knowledge Ian Griffiths reminded me this morning, is that currency is based on a single collection, whereas a TreeView control is based on multiple collections, i.e. the one at the root and one at each sub-node, etc. So, as I change the selection on the top node, the treeview has no single collection's current item to update (stored in an associated "view" of the data), so it doesn't update anything. As the user navigates from row to row, the "current" item never changes and our textboxes are not updated.

So, Ian informed me of a common "hack" to solve this problem. The basic idea is to forget about the magic "current node" and explicitly bind each control to the treeview's SelectedItem property. As it changes, regardless of which collection the item came from, each item control is updated, as data binding is supposed to work.

First, instead of setting the grid's DataContext to the actual data, shared with the treeview and the textboxes, we bind it to the currently selected treeview item:

// bind the grid containing the treeview and text boxes

// to point at the treeview's currently selected item

grid.SetBinding(FrameworkElement.DataContextProperty,

new Binding("SelectedItem") { ElementName = "leftTreeView" });

Now, because we want the treeview to in fact show our hierarchical collection of nodes, we set its DataContext explicitly:

// set the treeview's DataContext to be the data we want it to show

leftTreeView.DataContext = new Node[] { Node.GetNode(new Uri(uri)) };

Now, the treeview will show the data we wanted it to show, as before, but as the user changes the selection, the treeview's SelectedItem property changes, which updates the grid's DataContext, which signals the textboxes, bound to properties on grid's DataContext (because the DataContext property is inherited and we haven't overridden it on the textboxes), and the textboxes are updated.

Or, in other words, the textboxes effectively have a new idea of the "current" item that meshes with how the treeview works. Thanks, Ian!

Wednesday, Feb 6, 2008, 2:22 PM in .NET

.NET Source Code Mass Downloader

On 1/16/08, Microsoft announced the ability to download some of the .NET Framework source code for debugging. This download process was only supported inside of a properly configured Visual Studio 2008.

21 Days Later: Kerem Kusmezer and John Robbins released a tool to download the source code en masse. Frankly, I'm surprised it took so long. : )

Wednesday, Dec 26, 2007, 8:42 PM in .NET

Microsoft needs you to build Emacs.Net

Interested? Drop Doug a line.

Friday, Aug 17, 2007, 1:42 PM in .NET

Duck Typing for .NET!

For structural typing fans (and there'll be more of you over time -- trust me), David Meyer has posted a duck typing library for .NET. There are many reasons this is cool, but in summary, it allows many of the dynamic features of languages like Python and Ruby to be used in any .NET language. Very cool.

Sunday, May 6, 2007, 12:58 PM in .NET

Lutz's Silverlight 1.1 Alpha Samples

Lutz has ported some of his .NET code to use the Silverlight 1.1 alpha, which includes the mini-CLR (or whatever we're calling it these days : ). Enjoy.

Monday, Apr 16, 2007, 4:50 PM in .NET

WPF/E == Silverlight

If you haven't already heard about Microsoft's new high fidelity, cross-platform application development platform, you just haven't been paying attention. Silverlight is the new name for WPF/E (although it's still XAML-based) and does some *amazing* things.

And, if you can wait just a little while longer, you can read about Silverlight in Programming WPF (available now in Rough Cut format and for pre-order from Amazon) in an appendix by my friend and yours, Shawn Wildermuth. Shawn's been doing a ton of Silverlight work lately, including doing a bunch of Silverlight presentations for Microsoft, so he knows of what he speaks.

Thursday, Feb 1, 2007, 4:58 PM in .NET

The Potential of WPF/E

Savas turned me on to an amazing WPF/E application. I don't speak the language of the web site, but the screenshot on Savas's site is worth a look...

P.S. I don't smoke (except for the occasional cigar) and I definitely don't want to smell smoke while I eat or in my clothes, but the fact that smokers are no longer allowed to smoke most places strikes me as a violation of an important liberty. Have those studies about the effects of 3rd party smoke been verified?

Tuesday, Jan 30, 2007, 3:12 PM in .NET

WPF XBAP App: British Library Books Online

"The British Library is one of the world's leading libraries and the national library of the United Kingdom. By charter, it holds a copy of every book ever published in the UK, along with 58 million newspapers, 4.5 million maps, and 3.5 million sound recordings. They hold some of the most priceless literary treasures in existence, including the Codex Sinaiticus (one of the oldest New Testaments in existence), the Lindisfarne Gospels, one of Leonardo Da Vinci's notebooks, the first atlas of Europe by Mercator, the original illustrated manuscript Lewis Carroll's Alice's Adventures in Wonderland, Jane Austen's History of England and Mozart's musical diary. ...

"Enter a fantastic new application, developed in partnership between the British Library and Armadillo Systems. The British Library have digitized the pages of fifteen of their most valuable works and created Turning the Pages, a browser-based WPF application that allows you to interact with these books in a virtual environment from the comfort of your home."

Wow. This is literally the only way to interact with some of this material and it's enabled with WPF. Nice.

Friday, Jan 26, 2007, 3:24 PM in .NET

API Usability

Don has a piece up about something that I've always called "API Usability." The idea when building libraries is to write client code first against some pretend API that you wish existed and then to implement that API. Another good name for this approach would be "RAD API Design," simply because it's the same way I prefer to design UI -- lay out the UI the way you'd like it to look and then implement it that way. Of course, I have to admit to preferring Don's name for this style of programming (I like what he calls my conferences, too : ).

BTW, the comments to Don's piece mention the startling similarity between this approach and Test-Driven Development (TDD). I'm a huge fan of TDD (NUnit is a wonderful tool I use all day every day). I'd say that TDD is a generalization of my little "API usability" technique in that you can use it for all kinds of things, e.g. code coverage, perf testing, stress testing, etc., including API usability.

P.S. If we fix the atmosphere, clean up the water, stop polluting the soil and learn to live in harmony with our environment, what's to motivate us to move off this rock before we lose our aggressive drive and then, when we're sipping Mai Tais, the sun explodes? Consuming this planet until nothing's left but an empty husk and we're forced, like locusts, to move on to the next one, may well be the only thing that keeps our species alive (assuming we survive the coming ice age, of course).
